From this recent reddit comment thread / blog post, I had the idea of generalizing the operators found in the Data.Composition package. This could be a bad idea, as it encourages code to have larger, scarier operators, but I think I decided upon some interesting conventions. These operators can be used to construct pointfree expressions in a somewhat more straightforward, less nested fashion.

I brought it up on #haskell to mixed reactions. The following quote is now attributed to me via lambdabot by ski:

(on pointless black magic)

<mgsloan> welcome to excessively pointless plumbing operators :)

<byorgey> mgsloan: that's... terrifying

<DanBurton> you should put it on hackage

So I did! I cleaned up the library and put it on hackage. Here’s how it works:

Pair Plumber

(*^) :: r' -> r'' -> a -> (r', r'')

(*<) :: (a -> r') -> r'' -> a -> (r', r'')

(*>) :: r' -> (a -> r'') -> a -> (r', r'')

(*&) :: (a -> r') -> (a -> r'') -> a -> (r', r'')

(**) :: (a -> r') -> (b -> r'') -> (a, b) -> (r', r'')

(*^) f1 f2 _ = (f1, f2 ) -- Drop parameter

(*<) f1 f2 a = (f1 a, f2 ) -- Left gets parameter

(*>) f1 f2 a = (f1, f2 a) -- Right gets parameter

(*&) f1 f2 a = (f1 a, f2 a) -- Both get parameter

(**) f1 f2 (a, b) = (f1 a, f2 b) -- Split tuple

The first two parameters are functions which are applied to the remainder of the parameters, in a fashion requested by the symbol after the initial “*”. These symbols specify a routing – which functions each parameter is routed to – leading to the name “plumbing”. Here’s one downside of this naming scheme – (**) is Floating exponentiation in the Prelude – so modules that fully import this library need to import the Prelude hiding (**).

If these operators were generalized to arrows, which they could be, then (**) would be the same thing as (***), and (*&) would be the same thing as (&&&). So what’s (***) being used for now?

(***) :: (a -> c -> r') -> (b -> d -> r'') -> (a, b) -> (c, d) -> (r', r'')

(***) f1 f2 (a, b) (c, d) = (f1 a c, f2 b d)

It’s the generic zip on tuples! The additional ‘*’ indicates that an additional tuple parameter should be split between the functions. This version of (***) is something I often want, and have added it, under the name zipT (though bizip is probably a better name), to project-specific utilities libraries a few times.

If this is the extended version of (Control.Arrow.***), then what’s the extended version of (Control.Arrow.&&&)?

(*&&) :: (a -> b -> r') -> (a -> b -> r'') -> a -> b -> (r', r'')

(*&&) f1 f2 a b = (f1 a b, f2 a b)

We can also mix & and * in a couple ways:

(*&*) :: (a -> b -> r') -> (a -> c -> r'') -> a -> (b, c) -> (r', r'')

(*&*) f1 f2 a (b, c) = (f1 a b, f2 a c)

(**&) :: (a -> c -> r') -> (b -> c -> r'') -> (a, b) -> c -> (r', r'')

(**&) f1 f2 (a, b) c = (f1 a c, f2 b c)

Never before seen combinators, as far as I know, but I think they are reasonably understandable with a little practice. In theory, I’m defining a naming scheme for an infinite set of related function definitions. In practice, only plumbers up to arity 3 are defined by default – you can invoke a Template Haskell function to generate more if you need them.

Examples

Some examples of using these functions:

λ> (+1) ** (*2) $ (9, 4)

(10, 8)

λ> ((++) *** (++)) ("a", "b") (" forest", "ird")

("a forest", "bird")

λ> (maybe (:[]) replicate *<& length) (Just 3) "hi"

(["hi","hi","hi"], 2)

(11, 20) == ((+1) *& (*2)) 10

(12, 20) == ((+) *&& (*) ) 10 2

(13, 20) == ((+) *&>< (*) ) 10 2 3

(12, 30) == ((+) *&<> (*) ) 10 2 3

(12, 40) == ((+) *&< (*4)) 10 2

(14, 20) == ((+4) *&> (*) ) 10 2

Composition Plumber

($^) :: ( r'' -> r') -> r'' -> a -> r' -- Drop parameter

($<) :: (a -> r'' -> r') -> r'' -> a -> r' -- Left gets parameter

($>) :: ( r'' -> r') -> (a -> r'') -> a -> r' -- Right gets parameter

($&) :: (a -> r'' -> r') -> (a -> r'') -> a -> r' -- Both get parameter

($*) :: (a -> r'' -> r') -> (b -> r'') -> (a, b) -> r' -- Split tuple

($>) f1 f2 _ = f1 $ f2 -- Drop parameter

($<) f1 f2 a = f1 a $ f2 -- Left gets parameter

($>) f1 f2 a = f1 $ f2 a -- Right gets parameter

($&) f1 f2 a = f1 a $ f2 a -- Both get parameter

($*) f1 f2 (a, b) = f1 a $ f2 b -- Split tuple

The definitions are exactly the same as in the pair plumber, except using the ($) function to combine the arguments, instead of (,). Ordinary composition is "$>" in this system, as it combines the functions using "$", and provides the parameter to the function on the right. All of these operators have "infixr 9" priority, to match with ordinary composition.

λ> :t (.)

(.) :: (b -> c) -> (a -> b) -> a -> c

λ> :t ($>)

($>) :: r'' -> r') -> (a -> r'') -> a ->r'

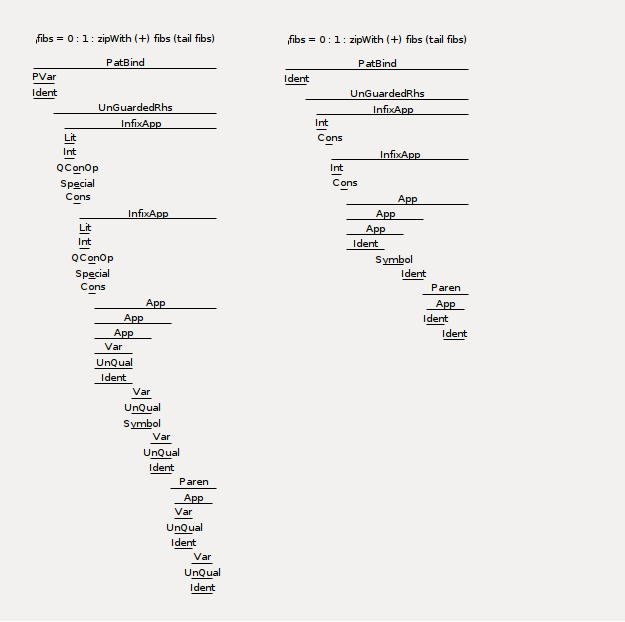

PNorm Example

Let's say we want to implement the p-norm on lists. This works by exponentiating each element of a list by p, summing, and exponentiating by 1 / p. Standard, cartesian distance is the p = 2 norm.

pow = flip (Prelude.**)

pnorm p xs = pow (1 / p) (sum (map (pow p) xs))

Here's how I'd normally write this function:

pnorm p = pow (1 / p) . sum . map (pow p)

But now we can go further! Without descending into the full points-free madness of

pnorm = ap ((.) . pow . (1 /)) ((sum .) . map . pow)

We can instead use the plumbers variant.

pnorm = (pow $> (1/)) $&> sum $>> map $> pow

-- (pow $> (1/)) $&>(sum $>> map($> pow)) -- infixr 9

-- /----^ p xs ^ /---^

-- | | | | |

-- \-------| \------=--/ |

-- | |

-- \---------=------/

The ascii illustration shows how the plumbing operators route the parameters. The arrow for xs may be a little confusing. That parameter is being provided to the result of (map $> pow), because ($>>) is expecting something that uses two arguments (apart from functions) and ($>) = (.) only has one.

What if we want to normalize according to a given pnorm?

pnormalized = (flip map $<> (/)) $>& pnorm

-- ^ p xs ^ ^

-- | | | | |

-- \--=-------|-\---/ |

-- | |

-- \-------/

List Cons Example

On IRC, ski suggested that such higher-arity combinators should be systematically decomposable into the others, and gave the following points-free example:

list3 :: a -> a -> a -> [a]

list3 = ($ []) .:: (.: (:)) .: (.: (:)) . (.: (:)) id

(.:) :: (b -> c) -> (a -> a1 -> b) -> a -> a1 -> c

(.:) = (.) . (.)

(.::) :: (b -> c) -> (a -> a1 -> a2 -> b) -> a -> a1 -> a2 -> c

(.::) = (.) . (.) . (.)

Instead of figuring out how to express these combinators in terms of the others (I might give some of these definitions / identities in a later post - omitted for brevity and convenience), I gave an equivalent definition of list3 using plumbers:

list3 = ((:) $<>> (:) $<> (:[]))

(.:) = ($>>)

(.::) = ($>>>)

Generalizing this to four is easy. However, the module doesn't currently export arity greater than three, as the compile time was longish, and the binary was 1MB. If you want these operators, you can use Control.Plumbers.TH to request their implementation.

list4 = ((:) $<>>> (:) $<>> (:) $<> (:[]))

Turns out that the expression of this can get even more uniform:

<ski> (could you separate `(:[])' into a `(:)' and a `[]', for uniformity ?)

<mgsloan> ((:) $<>>> (:) $<>> (:) $<> (:) $< []) 1 2 3 4

***ski claps

Something interesting to observe is that when using plumbing operators on cons, just by changing the operators involved, we can get out any 3-list that consists of the passed parameters:

λ> ((:) $<>> (:) $<> (:[])) 1 2 3

[1,2,3]

λ> ((:) $<>> (:) $<> (:) $< []) 1 2 3

[1,2,3]

λ> ((:) $<>> (:) $>< (:) $< []) 1 2 3

[1,3,2]

λ> ((:) $><> (:) $>< (:) $< []) 1 2 3

[2,3,1]

λ> ((:) $>>< (:) $>< (:) $< []) 1 2 3

[3,2,1]

λ> ((:) $>&^ (:) $>< (:) $< []) 1 2 3

[2,2,1]

λ> ((:) $&>^ (:) $>< (:) $< []) 1 2 3

[1,2,1]

Implementation

Here's the main body of Control.Plumbers:

$(implementPlumbers compositionSpec)

infixr 9 $^, $<, $>, $&, $*

infixr 9 $^^, $^<, $^>, $^&, $^*, $<^, $<<, $<>, $<&, $<*, $>^, $><, $>>, $>&, $>*, $&^, $&<, $&>, $&&, $&*, $*^, $*<, $*>, $*&, $**

infixr 9 $^^^, $^^<, $^^>, $^^&, $^^*, $^<^, $^<<, $^<>, $^<&, $^<*, $^>^, $^><, $^>>, $^>&, $^>*, $^&^, $^&<, $^&>, $^&&, $^&*, $^*^, $^*<, $^*>, $^*&, $^**, $<^^, $<^<, $<^>, $<^&, $<^*, $<<^, $<<<, $<<>, $<<&, $<<*, $<>^, $<><, $<>>, $<>&, $<>*, $<&^, $<&<, $<&>, $<&&, $<&*, $<*^, $<*<, $<*>, $<*&, $<**, $>^^, $>^<, $>^>, $>^&, $>^*, $><^, $><<, $><>, $><&, $><*, $>>^, $>><, $>>>, $>>&, $>>*, $>&^, $>&<, $>&>, $>&&, $>&*, $>*^, $>*<, $>*>, $>*&, $>**, $&^^, $&^<, $&^>, $&^&, $&^*, $&<^, $&<<, $&<>, $&<&, $&<*, $&>^, $&><, $&>>, $&>&, $&>*, $&&^, $&&<, $&&>, $&&&, $&&*, $&*^, $&*<, $&*>, $&*&, $&**, $*^^, $*^<, $*^>, $*^&, $*^*, $*<^, $*<<, $*<>, $*<&, $*<*, $*>^, $*><, $*>>, $*>&, $*>*, $*&^, $*&<, $*&>, $*&&, $*&*, $**^, $**<, $**>, $**&, $***

$(implementPlumbers productSpec)

infixr 9 *^, *<, *>, *&, **

infixr 9 *^^, *^<, *^>, *^&, *^*, *<^, *<<, *<>, *<&, *<*, *>^, *><, *>>, *>&, *>*, *&^, *&<, *&>, *&&, *&*, **^, **<, **>, **&, ***

infixr 9 *^^^, *^^<, *^^>, *^^&, *^^*, *^<^, *^<<, *^<>, *^<&, *^<*, *^>^, *^><, *^>>, *^>&, *^>*, *^&^, *^&<, *^&>, *^&&, *^&*, *^*^, *^*<, *^*>, *^*&, *^**, *<^^, *<^<, *<^>, *<^&, *<^*, *<<^, *<<<, *<<>, *<<&, *<<*, *<>^, *<><, *<>>, *<>&, *<>*, *<&^, *<&<, *<&>, *<&&, *<&*, *<*^, *<*<, *<*>, *<*&, *<**, *>^^, *>^<, *>^>, *>^&, *>^*, *><^, *><<, *><>, *><&, *><*, *>>^, *>><, *>>>, *>>&, *>>*, *>&^, *>&<, *>&>, *>&&, *>&*, *>*^, *>*<, *>*>, *>*&, *>**, *&^^, *&^<, *&^>, *&^&, *&^*, *&<^, *&<<, *&<>, *&<&, *&<*, *&>^, *&><, *&>>, *&>&, *&>*, *&&^, *&&<, *&&>, *&&&, *&&*, *&*^, *&*<, *&*>, *&*&, *&**, **^^, **^<, **^>, **^&, **^*, **<^, **<<, **<>, **<&, **<*, **>^, **><, **>>, **>&, **>*, **&^, **&<, **&>, **&&, **&*, ***^, ***<, ***>, ***&, ****

$(implementPlumbers ...) invokes a template haskell function which generates all of the function declarations. All of those "infixr 9" declarations should really be unnecessary - you can't create them with Template Haskell yet. See this GHC bug - which simonpj recently created a fix for! Props to him for fixing stuff like that! Until that fix is included in a GHC release, though, I'll leave these fixity declarations around.

You can create your own plumbing operators by using the following interface from Control.Plumbers.TH:

-- | Specifies all of the information needed to construct type declarations

-- for the plumber.

data PlumberTypes = PlumberTypes

{ leftType :: Type -- ^ Type of the left argument's result

, rightType :: Type -- ^ Type of the right argument's result

, resultType :: Type -- ^ Results type. This needs to be wrapped in a

-- forall naming all of the utilized type variables.

}

-- | A basic set of types, which make r' the left type, and r'' the right type.

-- The resultType is a forall that introduces these type variables, and has

-- undefined content. Therefore any implementation in terms of baseTypes

-- needs to redefine resultType, as the Forall has undefined as its content.

baseTypes :: PlumberTypes

baseTypes = PlumberTypes

{ leftType = mkVT "r'"

, rightType = mkVT "r''"

, resultType = ForallT [mkVB "r'", mkVB "r''"] [] undefined

}

-- | Specifies all of the information needed to implement a plumber.

data PlumberSpec = PlumberSpec

{ plumberOpE :: Exp -> Exp -> Exp -- ^ The plumber implementation

, plumberTypes :: Maybe PlumberTypes -- ^ Optional explicit type signatures

, plumberArities :: [Int] -- ^ Arities to generate - 26 is max

, plumberPrefix :: String -- ^ Prefix to use for operator

}

-- | Creates a plumber spec for the given prefix for the generated operators,

-- and the name of the infix operator to use to construct the implementation.

baseSpec :: String -> String -> PlumberSpec

baseSpec p e = PlumberSpec

{ plumberOpE = (\l r -> InfixE (Just l) (mkVE e) (Just r))

, plumberTypes = Nothing

, plumberArities = [1..3]

, plumberPrefix = p

}

The operators, along with those that are exported by Control.Plumbers.Monad are defined in Control.Plumbers.Specs as follows:

productSpec :: PlumberSpec

productSpec = (baseSpec "*" "_") { plumberTypes = Just productTypes

, plumberOpE = (\l r -> TupE [l, r]) }

compositionSpec :: PlumberSpec

compositionSpec = (baseSpec "$" "$") { plumberTypes = Just compositionTypes }

lbindSpec :: PlumberSpec

lbindSpec = (baseSpec "<=" "=<<") { plumberTypes = Just lbindTypes }

rbindSpec :: PlumberSpec

rbindSpec = (baseSpec ">=" ">>=") { plumberTypes = Just rbindTypes }

frbindSpec :: PlumberSpec

frbindSpec = (baseSpec ">>" ">>") { plumberTypes = Just $ fbindTypes False }

flbindSpec :: PlumberSpec

flbindSpec = (baseSpec "<<" "<<") { plumberTypes = Just $ fbindTypes True }

productTypes :: PlumberTypes

productTypes = addBaseContext $ baseTypes

{ resultType = tuplesT [leftType baseTypes, rightType baseTypes] }

compositionTypes :: PlumberTypes

compositionTypes = addBaseContext $ baseTypes

{ leftType = arrowsT [rightType baseTypes, leftType baseTypes]

, resultType = leftType baseTypes

}

This leaves the library open to others defining plumbing operators following the same conventions.

Thoughts?

I think that this family of operators has a very memorable and visual notation, and can be put to reasonable. I'm not set on all of these decisions, though - the notation may change in order to avoid collisions with (**) and the arrow operators.

What do people think? Is this awful? Useful? Are the symbol choices good? Other suggestions? It's something I've itched for often in past times when points-free style reaches slightly beyond its reasonable limit.